Gamers are having a rough go of it this year and understandably feel betrayed by one of their long-time hardware darlings, Nvidia. As you may have heard, Nvidia and other companies like Micron are prioritizing the AI requirements of big business over gamers and consumers who don’t wield as much sway over their bottom line. This blog post isn’t going to make gamers-at-large any happier, but in my defense, this really isn’t anything new. For as long as I can remember, I have considered buying a decent GPU for a new desktop PC a prudent and reasonable business expense.

Early on, the GPUs I purchased were intended to ensure support for multiple monitors, but as the technology required to support multiple monitors became ubiquitous, I continued to buy GPUs for special circumstances where I knew users like me could benefit from enhanced GPU processing. If you value your time and that of your fellow employees and clients, you need to champion investments that empower and facilitate your team’s ability to not only meet ongoing technology challenges but also provide them with the tools that will enable them to exceed expectations in the future.

There is perhaps no better example of this than the implementation of AI at your office, and I am not talking about using an AI PC with Copilot. I mean real-world implementation: running multiple local LLMs simultaneously, LLM orchestration and coding agents (e.g., Claude Code), building and using AI agents (e.g., OpenClaw), using, creating and hosting MCP servers, implementing REST API integration, et cetera. While AI cloud resources, such as frontier foundation models operating within AI factories, can be dramatically more powerful and appear less expensive than purchasing local hardware, the larger issue of data privacy is the elephant in the room. For me, this issue is twofold: I cannot put my intellectual property or any part of my clients’ private data at the mercy of what may turn out to be false security promises as AI use agreements with providers continue to evolve.

The overriding concern of data security limits what users can do with cloud resources. Users may not feel comfortable attempting certain things on cloud resources due to concerns over security, and rightly so. The answer to these concerns is clear AI use policies and systems that dictate acceptable use of cloud and local AI resources. Those same policies and systems should simultaneously facilitate the ability to use AI in productive ways and enforce data security without handicapping technological progress. AI is not the be-all and end-all of productivity, but it can be a valuable tool when used responsibly.

Game-Changing Technology

It is easy to ignore minor changes in processing power year to year, but when true paradigm-shifting tech becomes available and affordable, we need to act on it. This is the thing that makes me buy new hardware. The Nvidia GeForce RTX 5090 (“5090”) and hardware of its ilk are game-changing. Their affordability may be debatable, but if you aren’t able to use them, or superior tech options, you are operating at a technological and competitive disadvantage to your peers. With these issues in mind, I strongly recommend systems on par with the Alienware Area-51 Gaming Desktop (model AAT2265) or better for complex local AI use cases.

Six Reasons to Consider Buying the Dell Alienware Area-51 Gaming Desktop for Local AI Use Cases

- CPU – The AMD Ryzen 9 9950X3D CPU has excellent single-thread processing speed, superior multithreaded processing speed, and a large cache. It offers power without compromise. One of my aims when purchasing a new desktop is to never have to upgrade the equipment during the life of the purchase, and that should be possible with this system. There is an option to get an Intel Core Ultra 9 285K, but I am not a huge fan of using the Arrow Lake architecture for AI. Additionally, being able to select a PCIe 5.0 NVMe drive for primary/OS storage means that you can remove the most obvious remaining local processing speed bottleneck.

- Market forces – The expectation of constrained future supply due to AI data center demands taking precedence over SMBs and consumers makes buying now more appealing than waiting until later, when scarcity and corresponding increased demand could impact buying power.

- 5090 availability – This local LLM beast facilitates private use of decent-size LLMs (30B parameter models run very fast; 70B parameter models are usable). AI is a tool we use to get our jobs done as efficiently as possible. This is simply a cost of doing business. There are other options, but this is currently the fastest GPU you can buy short of enterprise-level hardware, where the cost increases significantly. Due to 5090 availability issues, buying the GPU bundled in a PC gaming build may be the easiest way to get one.

- Competitive pricing – Dell’s Alienware pricing is reasonable given the current premiums on 5090 GPUs. You could get similarly configured gaming desktop PCs for considerably less, but the Alienware price point offers superior build quality. You could also spend a lot more money buying similarly configured “workstation” hardware, which might provide a better upgrade path, but you would likely be paying enterprise prices.

- Silence and build quality – When you set it up, you should notice a deafening silence in comparison to similar systems. The case is extremely well designed to keep the system cool and quiet.

- Onsite support and hardware/driver continuity – You can be confident that Dell will show up to service the PC if needed. It weighs a ton. Nobody from your office will want to carry it anywhere for service… ever. Dell is also very good at making updated drivers available when they become necessary.

The latest Area-51 build has been available with Intel CPU options since January 2025, and Dell added AMD options to the configuration in November 2025. In my experience, even though Dell quoted shipping at roughly a month, the system shipped sooner. The one I ordered in early January 2026 arrived in less than two weeks. It comes with a single year of onsite support, but I added three years to it, and if you buy one, you probably should too. For those curious about the benchmarks, I ran PassMark’s PerformanceTest on it and have included the results below.

PassMark PerformanceTest results. Compare your PC here.

The Evolution of Local AI Use Cases

Back in 2020, during the crypto boom, I bought an Nvidia GeForce RTX 2060 Super GPU with 8GB VRAM, which cost $500 at the time. It is not a barnburner by today’s standards, but it can run the OpenAI/gpt-oss-20b model well enough on LM Studio. I also have a notebook with an Nvidia GeForce RTX 4060 Laptop GPU. That too has 8GB of VRAM and can run local LLMs much faster than the old desktop.

These systems enabled me to run, use, and test local LLMs to a certain point, but the results weren’t fantastic. I am short on patience when it comes to waiting for computers to do things. As I tried increasingly complex models and tasks locally, I reached some predictable limitations: context length, time to first token, and tokens per second. Watching my computer render characters in slow motion while using larger LLMs made me wonder how much of a difference running those same models on a 5090 would make. The difference is night and day. I have zero regrets about this purchase.
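Two of those limits, first-token latency and tokens per second, are easy to quantify if you record a few timestamps around a request. The following is a minimal sketch under that assumption; the `GenerationTiming` class and its field names are hypothetical and are not part of LM Studio or any other model runner.

```python
from dataclasses import dataclass


@dataclass
class GenerationTiming:
    """Timestamps (in seconds) recorded around one generation request.

    Hypothetical container: you would fill it in yourself, for example
    with time.perf_counter() readings taken while streaming a response.
    """
    request_sent: float
    first_token: float
    last_token: float
    tokens_generated: int


def time_to_first_token(t: GenerationTiming) -> float:
    """Delay between sending the prompt and seeing the first token."""
    return t.first_token - t.request_sent


def tokens_per_second(t: GenerationTiming) -> float:
    """Decode speed, measured after the first token has arrived."""
    decode_time = t.last_token - t.first_token
    if decode_time <= 0 or t.tokens_generated < 2:
        return 0.0
    # The first token is excluded because its cost is dominated by
    # prompt processing, which time_to_first_token already captures.
    return (t.tokens_generated - 1) / decode_time


if __name__ == "__main__":
    # Made-up numbers for illustration only, not measurements.
    sample = GenerationTiming(
        request_sent=0.0, first_token=0.5, last_token=10.5, tokens_generated=201
    )
    print(f"time to first token: {time_to_first_token(sample):.2f} s")
    print(f"decode speed: {tokens_per_second(sample):.1f} tokens/s")
```

Keeping the two numbers separate is a deliberate choice: a long prompt mostly hurts the first, while a model that spills out of VRAM mostly hurts the second.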

One interesting takeaway from using the 5090 and running many tests across my various systems is that a model’s results can change when it is run on different hardware. Ideally, they won’t, but your hardware affects how the model is executed by a local AI model runner, which can influence its output. For example, I ran the same version of LM Studio with identical models and settings and gave both my old and new desktop systems the same prompt. Logically, you might expect the same results, but in fact the results differ.

The result from my old desktop was terse and simple, while the result from my new desktop was comprehensive. Though I theoretically understand how AI works and could have anticipated some differences between the results due to the variability of calculations between hardware, I was admittedly surprised. Seeing the difference firsthand adds context to my understanding.

I wanted to attribute this positive difference to my faster hardware, but that would be incorrect. Mathematically speaking, the output is simply different because the hardware is different, and the fact that the response is comprehensive on my new desktop should be purely coincidental. On closer inspection, the model I used (OpenAI/gpt-oss-20b) likely ran the prompt under constraints when it was run on the 2060 Super with 8GB VRAM. That would have caused GPU offloading (since the model size is 12GB), noise, and numerical degradation in calculations. Those issues likely created a bias towards a less comprehensive answer.
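One general reason the same math can yield different numbers on different hardware is that floating-point addition is not associative: adding the same values in a different order can change the last few bits of the result. The sketch below illustrates only that effect in plain Python. It is not how LM Studio or a GPU kernel is implemented, and `sum_in_order` is a hypothetical helper.

```python
import math


def sum_in_order(values):
    """Add floats strictly left to right, the way a simple loop would."""
    total = 0.0
    for v in values:
        total += v
    return total


values = [0.1, 0.2, 0.3]
forward = sum_in_order(values)                   # (0.1 + 0.2) + 0.3
backward = sum_in_order(list(reversed(values)))  # (0.3 + 0.2) + 0.1

if __name__ == "__main__":
    print(repr(forward), repr(backward))
    # The two sums agree to many digits, yet they are not bit-identical.
    print("identical:", forward == backward)
    print("close:", math.isclose(forward, backward))
    # A comparison that sits right on the boundary can go either way.
    print("forward > 0.6:", forward > 0.6)
    print("backward > 0.6:", backward > 0.6)
```

In a model, differences this small only matter when two candidate tokens are nearly tied, but once one token differs, everything generated after it can diverge.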

Moving Forward

Given the opportunity cost, ongoing demands of AI data centers for PC memory, storage and GPUs, and a perceived scarcity issue that will persist for years, now seems like a better time to purchase a 5090 than later, when it may not be possible. Please note this computer makes sense for me and other power users who can benefit from having a 5090 for local AI use cases, but it wouldn’t be a good choice for users who don’t fit that profile. If you are interested in learning about using local AI resources, almost any Nvidia GeForce RTX 50 series GPU with at least 8GB VRAM could be a good starting point.

In the PC/GPU world, VRAM ultimately determines how large a model you can use fully on the GPU and how many models you can use simultaneously. A larger model size typically corresponds with greater training depth, capability, and sophistication, which often equates to less iterative work and greater user productivity in the end. When you run out of VRAM, your system attempts to compensate by offloading portions of the model to RAM and CPU (aka GPU offloading), which slows down processing noticeably due to lower bandwidth and higher latency. If you attempt to use more total memory than is available, the model may fail to load or the system may slow dramatically.
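A back-of-the-envelope estimate shows where that boundary falls. This is a rough sketch, not a sizing tool: it assumes the weights dominate memory use, that the model is quantized to a given number of bits per weight (4-bit is an assumption here, not something stated above), and that a couple of GB is held back for the KV cache and runtime overhead. The function names and the `reserve_gb` default are hypothetical.

```python
def weights_size_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8


def fraction_on_gpu(model_gb: float, vram_gb: float, reserve_gb: float = 2.0) -> float:
    """Share of the weights that fits in VRAM.

    reserve_gb is a guess at the room needed for the KV cache and
    runtime overhead; real usage depends on context length and runner.
    """
    usable = max(vram_gb - reserve_gb, 0.0)
    return min(usable / model_gb, 1.0)


if __name__ == "__main__":
    for label, params, bits in [("30B at 4-bit", 30, 4), ("70B at 4-bit", 70, 4)]:
        size = weights_size_gb(params, bits)
        share = fraction_on_gpu(size, vram_gb=32)
        print(f"{label}: ~{size:.0f} GB of weights, {share:.0%} fits on a 32 GB GPU")

    # The 12 GB model and 8 GB card mentioned earlier in this post.
    share = fraction_on_gpu(12, vram_gb=8)
    print(f"12 GB model on an 8 GB GPU: {share:.0%} fits, the rest runs from system RAM")
```

Anything below 100% means part of the model is served from slower system memory, which is where the slowdown described above comes from.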

Using a Mac with unified memory instead of a PC with a discrete GPU removes the hard VRAM boundary and reduces the performance cliff associated with GPU offloading, but you are still limited to whatever unified memory your Mac has. Assuming you can fit the model(s) in use and their associated KV (Key-Value) cache — which scales with context length — into the 5090’s 32GB of VRAM, your typical Mac isn’t going to outperform a 5090 in raw inference speed.
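To see how the KV cache scales with context length, the standard estimate multiplies two tensors (keys and values) by the number of layers, the number of KV heads, the head dimension, the context length, and the bytes per stored value. The sketch below applies that formula. The model dimensions are hypothetical placeholders rather than the specifications of any model named in this post, and 16-bit cache values are an assumption.

```python
def kv_cache_bytes(
    n_layers: int,
    n_kv_heads: int,
    head_dim: int,
    context_tokens: int,
    bytes_per_value: int = 2,  # assumes a 16-bit cache
) -> int:
    """Estimate KV cache size for one sequence at a given context length."""
    # The leading 2 accounts for one key tensor and one value tensor per layer.
    return 2 * n_layers * n_kv_heads * head_dim * context_tokens * bytes_per_value


if __name__ == "__main__":
    # Hypothetical model shape, chosen only to make the arithmetic easy to follow.
    shape = dict(n_layers=32, n_kv_heads=8, head_dim=128)
    for context in (8_192, 32_768, 131_072):
        gib = kv_cache_bytes(context_tokens=context, **shape) / 2**30
        print(f"{context:>7} tokens of context: {gib:.0f} GiB of KV cache")
```

The growth is linear: with these placeholder dimensions, every doubling of context doubles the cache, so a long context window can claim a meaningful share of VRAM on top of the weights.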

If you are serious about working with AI locally, you may want to step up to an Nvidia GeForce RTX 50 series GPU with at least 16GB of VRAM, which would provide a longer runway for experimentation. Neither option (8GB or 16GB) should break the bank compared to a 5090. Buying a cheaper GPU will allow you to work with local AI resources and become familiar with the tools, but if all goes well, you may wish you had purchased a 5090 GPU or something capable of running even larger models concurrently, such as a high-end Mac Studio (M3 Ultra).

About the Author: Kevin Shea is the Founder and Principal Consultant of Quartare; Quartare provides a wide variety of agile technology solutions to investors and the financial services community at large.

To learn more, please visit Quartare.com, contact Kevin Shea via phone at 617-720-3400 x202 or e-mail at kshea@quartare.com.

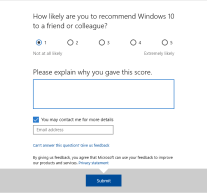

